In-place and out-of-place NumPy functions

To apply the same operation to every value in a NumPy array, we can apply a function to the whole array at once. This is called a vectorized operation. A vectorized operation can be performed in-place or out-of-place. What is the difference?

Out-of-place functions read data from one array and store the results in another array.

In-place functions read data from an array and store the results back into that same array.
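A minimal sketch of the two styles on a tiny array may help here. The array names a, b and c are only illustrative and are not part of the example used later in this article.

```python
import numpy as np

a = np.array([1.0, 4.0, 9.0])

# Out-of-place: the result goes into a newly allocated array, `a` is untouched.
b = np.sqrt(a)
print(np.shares_memory(a, b))  # False

# In-place: the `out=` argument makes the ufunc write into its own input.
c = np.sqrt(a, out=a)
print(c is a)  # True
```

Augmented assignments such as a += 1 or a **= 2 are in-place in the same sense: they overwrite the existing buffer instead of allocating a new one.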

To understand the difference between these two types of functions, let's calculate the same mathematical expression in two different ways and apply it to a very large NumPy array.

\[ B = \sin(\sin(\sin(\sin(\sin(\sin(\sin(\sin((A^A)^{(A^A)})))))))) \]

This function is not very meaningful, but it is a nice way to explain the idea of in-place and out-of-place NumPy functions.

Out-of-place style coding

Let's write an out-of-place style function for NumPy calculations. We have a NumPy array named frm; we apply the function described above to it and store the final result in an array named to:

    import numpy as np

    def outplace_func(frm):
        # Every ** and every np.sin call returns a newly allocated array
        to = np.sin(np.sin(np.sin(np.sin(np.sin(np.sin(np.sin(np.sin((frm**frm)**(frm**frm)))))))))
        return to

It is easy to write, and the code looks simple and self-explanatory. But how does this code work? This is the big issue: at every step, NumPy creates a temporary array the same size as frm and stores the intermediate result there, and it repeats this for every function call.
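To make the hidden temporaries visible, the nested expression can be unrolled by hand. The sketch below is only an approximation of what happens internally (NumPy may reuse a temporary buffer in some operator expressions), and the name outplace_func_unrolled is hypothetical, introduced here for illustration.

```python
import numpy as np


def outplace_func_unrolled(frm):
    # Each assignment below allocates a new array with the shape of `frm`.
    t1 = frm ** frm        # temporary 1
    t2 = frm ** frm        # temporary 2 (the sub-expression is evaluated twice)
    to = t1 ** t2          # temporary 3
    del t1, t2             # the first two temporaries are no longer needed
    for _ in range(8):
        to = np.sin(to)    # a new array on every pass, the previous one is released
    return to


frm = np.random.random((4, 4))
nested = np.sin(np.sin(np.sin(np.sin(np.sin(np.sin(np.sin(np.sin(
    (frm ** frm) ** (frm ** frm)))))))))
result = outplace_func_unrolled(frm)
print(np.allclose(result, nested))  # True
```

At any moment at least two full-size arrays exist besides the input: the one being read and the one being written.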

In-place style coding

Now the in-place function. First, we copy the frm array into a new array named to, and then perform all calculations on that copy:

    def inplace_func(frm):
        # Copy the input so the caller's array is left untouched
        to = np.array(frm)
        # Calculate the power in place: to = A^A, then to = (A^A)^(A^A)
        to **= to
        to **= to
        # Apply sin() in place 8 times
        for _ in range(8):
            np.sin(to, out=to)
        return to

The code looks longer and more complicated, but it is in fact better optimized: apart from the single copy at the start, no temporary arrays are allocated during its execution. Let's compare the two functions on a large array. After each operation the script sleeps for 5 seconds, so that the memory changes can be observed with external monitoring tools. The array used for the calculation has 25,000 x 25,000 elements.
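To get a feel for the numbers, the size of one such array can be estimated with a little arithmetic. This sketch assumes 8-byte float64 elements, which is what np.random.random returns; the variable names are illustrative.

```python
import numpy as np

n = 25_000
itemsize = np.dtype(np.float64).itemsize   # bytes per element
array_bytes = n * n * itemsize             # total size of one n x n array

print(f"{array_bytes / 1e9:.1f} GB per array")   # 5.0 GB per array
```

Every full-size temporary therefore costs roughly another 5 GB of memory. The benchmark script, np_memory.py, is the following: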

    import time

    import numpy as np

    # outplace_func and inplace_func are the two functions defined above

    A = np.random.random([25000, 25000])
    time.sleep(5)

    # Time the out-of-place version
    t = time.time()
    B = outplace_func(A)
    print(f"Out place: {time.time()-t}sec")
    time.sleep(5)
    del B
    time.sleep(5)

    # Time the in-place version
    t = time.time()
    B = inplace_func(A)
    print(f"In place: {time.time()-t}sec")
    time.sleep(5)
    del B
    time.sleep(5)

    del A
    time.sleep(5)

The script is run with the first command below, and the recorded memory usage is then plotted with the second:

    mprof run np_memory.py
    mprof plot

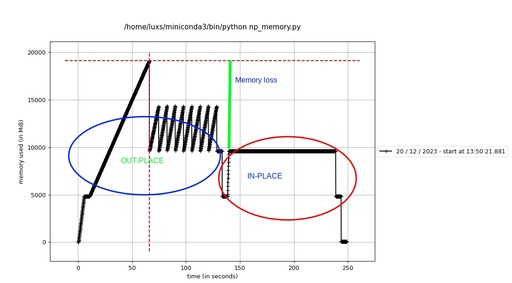

Real-time memory consumption of the Python process while running the out-of-place and in-place functions.


First of all, running this code shows that the out-of-place function takes 117.9 sec and the in-place function takes 95.3 sec. So the in-place function is about 1.24x faster: the out-of-place version takes almost a quarter longer for the same task. The memory consumption looks even more dramatic: the out-of-place function consumes a lot of memory on temporary arrays, whereas the in-place function allocates nothing beyond its single working copy.
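The memory effect can also be reproduced on a much smaller array with the standard-library tracemalloc module. This is a rough sketch and not the measurement used above: it assumes a NumPy version that reports its array buffers to tracemalloc, peak_bytes is a hypothetical helper, and outplace_func is written here as a loop-based equivalent of the nested expression.

```python
import tracemalloc

import numpy as np


def outplace_func(frm):
    # Loop-based equivalent of the nested expression: new array at each step
    to = (frm ** frm) ** (frm ** frm)
    for _ in range(8):
        to = np.sin(to)
    return to


def inplace_func(frm):
    to = np.array(frm)        # the only full-size allocation
    to **= to
    to **= to
    for _ in range(8):
        np.sin(to, out=to)    # overwrite `to` instead of allocating
    return to


def peak_bytes(func, arr):
    """Hypothetical helper: peak traced allocation while func(arr) runs."""
    tracemalloc.start()
    result = func(arr)
    _, peak = tracemalloc.get_traced_memory()
    tracemalloc.stop()
    del result
    return peak


A = np.random.random((1000, 1000))   # about 8 MB, small enough for any machine
peak_out = peak_bytes(outplace_func, A)
peak_in = peak_bytes(inplace_func, A)
print(f"out-of-place peak: {peak_out / 1e6:.1f} MB")
print(f"in-place peak:     {peak_in / 1e6:.1f} MB")
```

The exact figures depend on the platform and the NumPy version, but the out-of-place peak should be a multiple of the in-place one.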

Therefore, my conclusion is: if you can, you should always use in-place NumPy functions!

Published: 2023-12-25 01:47:21

Updated: 2023-12-25 01:48:38